Decoder-Only Transformers, ChatGPT's specific Transformer, Clearly Explained!!!

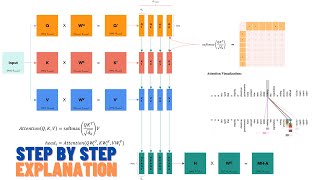

Transformers are taking over AI right now, and quite possibly their most famous use is in ChatGPT. ChatGPT uses a specific type of Transformer called a Decoder-Only Transformer, and this StatQuest shows you how they work, one step at a time. And at the end (at 32:14), we talk about the differences between a Normal Transformer and a Decoder-Only Transformer. BAM!

NOTE: If you're interested in learning more about Backpropagation, check out these 'Quests:

The Chain Rule: https://youtu.be/wl1myxrtQHQ

Gradient Descent: https://youtu.be/sDv4f4s2SB8

Backpropagation Main Ideas: https://youtu.be/IN2XmBhILt4

Backpropagation Details Part 1: https://youtu.be/iyn2zdALii8

Backpropagation Details Part 2: https://youtu.be/GKZoOHXGcLo

If you're interested in learning more about the SoftMax function, check out:

https://youtu.be/KpKog-L9veg

If you're interested in learning more about Word Embedding, check out: https://youtu.be/viZrOnJclY0

If you'd like to learn more about calculating similarities in the context of neural networks and the Dot Product, check out:

Cosine Similarity: https://youtu.be/e9U0QAFbfLI

Attention: https://youtu.be/PSs6nxngL6k

If you'd like to learn more about Normal Transformers, see: https://youtu.be/zxQyTK8quyY

For a complete index of all the StatQuest videos, check out:

https://statquest.org/video-index/

If you'd like to support StatQuest, please consider...

Patreon: https://www.patreon.com/statquest

...or...

YouTube Membership: https://www.youtube.com/channel/UCtYLUTtgS3k1Fg4y5tAhLbw/join

...buying one of my books, a study guide, a t-shirt or hoodie, or a song from the StatQuest store...

https://statquest.org/statquest-store/

...or just donating to StatQuest!

https://www.paypal.me/statquest

Lastly, if you want to keep up with me as I research and create new StatQuests, follow me on Twitter:

https://twitter.com/joshuastarmer

0:00 Awesome song and introduction

1:34 Word Embedding

7:26 Position Encoding

10:10 Masked Self-Attention, an Autoregressive method

22:35 Residual Connections

23:00 Generating the next word in the prompt

26:23 Review of encoding and generating the prompt

27:20 Generating the output, Part 1

28:46 Masked Self-Attention while generating the output

30:40 Generating the output, Part 2

32:14 Normal Transformers vs Decoder-Only Transformers

#StatQuest